Consistent heuristic

In the study of path-finding problems in artificial intelligence, a heuristic function is said to be consistent, or monotone, if its estimate is always less than or equal to the estimated distance from any neighbouring vertex to the goal, plus the cost of reaching that neighbour.

Formally, for every node N and each successor P of N, the estimated cost of reaching the goal from N is no greater than the step cost of getting to P plus the estimated cost of reaching the goal from P. That is:

- $h(N) \le c(N,P) + h(P)$, and

- $h(G) = 0$

where

- h is the consistent heuristic function

- N is any node in the graph

- P is any successor of N

- G is any goal node

- c(N,P) is the cost of reaching node P from N
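The two conditions above can be checked mechanically on a small explicit graph. The following is a minimal sketch, assuming the graph is stored as a dictionary mapping each node to its successors and step costs; the function name `is_consistent` and the example graph are hypothetical and chosen only for illustration.

```python
def is_consistent(graph, h, goals):
    """Check h(N) <= c(N, P) + h(P) on every edge and h(G) == 0 at every goal.

    graph: dict mapping node -> {successor: step cost}  (assumed layout)
    h:     dict mapping node -> heuristic estimate
    goals: iterable of goal nodes
    """
    if any(h[g] != 0 for g in goals):
        return False
    return all(
        h[n] <= cost + h[p]
        for n, successors in graph.items()
        for p, cost in successors.items()
    )


# Hypothetical example: edges S -> A (1), S -> G (4), A -> G (2).
example_graph = {
    "S": {"A": 1, "G": 4},
    "A": {"G": 2},
    "G": {},
}

consistent_h = {"S": 3, "A": 2, "G": 0}
# Violates the condition on edge S -> A: h(S) = 3 > c(S, A) + h(A) = 1 + 1.
inconsistent_h = {"S": 3, "A": 1, "G": 0}

print(is_consistent(example_graph, consistent_h, ["G"]))
print(is_consistent(example_graph, inconsistent_h, ["G"]))
```

The check visits every edge once, so it is only practical when the graph is small enough to enumerate.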

Informally, the estimate at any node i is never greater than the cost of the step to the next node i+1 plus the estimate at node i+1.

A consistent heuristic is also admissible, i.e. it never overestimates the cost of reaching the goal (the converse, however, is not always true). Assuming non-negative edge costs, this can easily be proved by induction.[1]

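Let $N_0$ be the goal node, so that $h(N_0) = 0$ is its estimated cost. The base case is then trivially true, as $0 \le 0$. For the inductive step, take a cheapest path $N_n, N_{n-1}, \dots, N_0$ from a node $N_n$ to the goal. Since the heuristic is consistent, expanding each term gives $h(N_n) \le c(N_n, N_{n-1}) + h(N_{n-1}) \le c(N_n, N_{n-1}) + c(N_{n-1}, N_{n-2}) + h(N_{n-2}) \le \dots \le \sum_{i=1}^{n} c(N_i, N_{i-1}) + h(N_0)$. Because $h(N_0) = 0$, the right-hand side equals the true cost $\sum_{i=1}^{n} c(N_i, N_{i-1})$, so any consistent heuristic is also admissible, since it is upper-bounded by the true cost.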

The converse does not hold: one can always construct a heuristic that never exceeds the true cost but is nevertheless inconsistent. For instance, let the heuristic estimate increase from the farthest node as we get closer to the goal and, once the estimate $h(N_i)$ is at most the true cost $h^*(N_i)$, set $h(N_{i-1}) = h(N_i) - 2c(N_i, N_{i-1})$, so that (for a positive step cost) the estimate drops by more than the cost of the step.
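A small chain makes the gap between admissibility and consistency concrete. The sketch below assumes a hypothetical four-node chain N3 → N2 → N1 → N0 with unit step costs and goal N0; the helper names are illustrative. True costs are computed with Dijkstra's algorithm on the reversed edges, and the heuristic rises towards the goal and then drops by twice the step cost.

```python
import heapq


def true_costs(graph, goal):
    """Cheapest cost from every node to `goal` (Dijkstra on reversed edges)."""
    reverse = {n: {} for n in graph}
    for n, successors in graph.items():
        for p, cost in successors.items():
            reverse[p][n] = cost
    dist = {goal: 0}
    queue = [(0, goal)]
    while queue:
        d, n = heapq.heappop(queue)
        if d > dist[n]:
            continue  # stale queue entry
        for m, cost in reverse[n].items():
            if d + cost < dist.get(m, float("inf")):
                dist[m] = d + cost
                heapq.heappush(queue, (d + cost, m))
    return dist


def is_admissible(graph, h, goal):
    """h never exceeds the true cost to the goal."""
    star = true_costs(graph, goal)
    return all(h[n] <= star[n] for n in star)


def is_consistent(graph, h, goal):
    """h(goal) == 0 and h(N) <= c(N, P) + h(P) on every edge."""
    return h[goal] == 0 and all(
        h[n] <= cost + h[p]
        for n, successors in graph.items()
        for p, cost in successors.items()
    )


# Hypothetical chain with unit costs; true costs to N0 are 3, 2, 1, 0.
chain = {"N3": {"N2": 1}, "N2": {"N1": 1}, "N1": {"N0": 1}, "N0": {}}
# h rises from N3 to N2, then drops by 2 * c(N2, N1) at N1.
h = {"N3": 1, "N2": 2, "N1": 0, "N0": 0}

print(is_admissible(chain, h, "N0"))  # every estimate is below the true cost
print(is_consistent(chain, h, "N0"))  # fails on edge N2 -> N1: 2 > 1 + 0
```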

Consequences of monotonicity


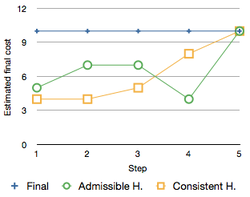

Consistent heuristics are called monotone because the estimated final cost of a partial solution, $f(N_j) = g(N_j) + h(N_j)$, is monotonically non-decreasing along any path, where $g(N_j) = \sum_{i=2}^{j} c(N_{i-1}, N_i)$ is the cost of the best path from start node $N_1$ to $N_j$. A heuristic is consistent if and only if it obeys the triangle inequality.[2]

The justification for $f$ to be monotonically non-decreasing under a consistent heuristic is as follows:

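Suppose $n'$ is a successor of $n$; then $g(n') = g(n) + c(n, a, n')$ for some action $a$ from $n$ to $n'$. Then we have that

$$f(n') = g(n') + h(n') = g(n) + c(n, a, n') + h(n') \ge g(n) + h(n) = f(n),$$

hence $f(n') \ge f(n)$.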

In the A* search algorithm, using a consistent heuristic means that once a node is expanded, the cost by which it was reached is the lowest possible, under the same conditions that Dijkstra's algorithm requires in solving the shortest path problem (no negative cost edges). In fact, if the search graph is given cost $c'(N,P) = c(N,P) + h(P) - h(N)$ for a consistent $h$, then A* is equivalent to best-first search on that graph using Dijkstra's algorithm.[3]
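The equivalence with Dijkstra's algorithm can be illustrated by reweighting each edge from N to P to c(N,P) + h(P) − h(N). The sketch below assumes a hypothetical four-node graph and a consistent heuristic; `dijkstra` and `reweight` are illustrative helper names. Because the heuristic terms telescope along any path, the reweighted distance to a node equals its A* f-value minus the constant h(start), so both searches order nodes in the same way.

```python
import heapq


def dijkstra(graph, start):
    """Cheapest cost from `start` to every reachable node."""
    dist = {start: 0}
    queue = [(0, start)]
    while queue:
        d, n = heapq.heappop(queue)
        if d > dist[n]:
            continue  # stale queue entry
        for p, cost in graph[n].items():
            if d + cost < dist.get(p, float("inf")):
                dist[p] = d + cost
                heapq.heappush(queue, (d + cost, p))
    return dist


def reweight(graph, h):
    """Replace each cost c(N, P) by c(N, P) + h(P) - h(N)."""
    return {
        n: {p: cost + h[p] - h[n] for p, cost in successors.items()}
        for n, successors in graph.items()
    }


# Hypothetical graph and consistent heuristic (goal is G).
graph = {
    "S": {"A": 1, "B": 4},
    "A": {"B": 2, "G": 5},
    "B": {"G": 1},
    "G": {},
}
h = {"S": 4, "A": 3, "B": 1, "G": 0}

reduced = reweight(graph, h)
# Consistency is exactly what keeps every reweighted cost non-negative.
assert all(c >= 0 for succ in reduced.values() for c in succ.values())

g = dijkstra(graph, "S")            # ordinary path costs g(n)
g_reduced = dijkstra(reduced, "S")  # distances in the reweighted graph
for n in graph:
    # Reweighted distance = g(n) + h(n) - h(S) = f(n) - h(S).
    print(n, g_reduced[n], g[n] + h[n] - h["S"])
```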

With a non-decreasing $f$ under consistent heuristics, one can show that A* achieves optimality with cycle-checking, that is, when A* expands a node $n$, the optimal path to $n$ has already been found. Suppose for the sake of contradiction that when A* expands $n$, the optimal path has not been found. Then, by the graph separation property, there must exist another node $n'$ on the optimal path to $n$ on the frontier. Since $n$ was selected for expansion instead of $n'$, we must have $f(n) \le f(n')$, where $f(n)$ is computed from the suboptimal path cost currently recorded for $n$. But f-values are monotonically non-decreasing along any path under the consistent heuristic function $h$, so, because $n'$ is on the optimal path to $n$, $f(n')$ is at most the f-value of $n$ along the optimal path, which is strictly smaller than $f(n)$. Hence $f(n') < f(n)$. This is a contradiction, meaning that $n'$ should have been selected for expansion first instead of $n$.[4]

In the unusual event that an admissible heuristic is not consistent, a node will need repeated expansion every time a new best (so-far) cost is achieved for it.
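Repeated expansion can be observed on a small example. The following is a minimal A* sketch that allows re-opening, assuming a hypothetical graph in which the direct edge S → B is more expensive than the detour through A; the function name `astar_expansions` and both heuristics are illustrative. For simplicity it does not stop at the goal, so every reachable node is expanded.

```python
import heapq


def astar_expansions(graph, h, start):
    """Run A* with re-opening; return (node, g) pairs in expansion order."""
    best_g = {start: 0}
    queue = [(h[start], 0, start)]  # entries are (f, g, node)
    expansions = []
    while queue:
        _, g, n = heapq.heappop(queue)
        if g > best_g[n]:
            continue  # stale entry: a cheaper path to n is already known
        expansions.append((n, g))
        for p, cost in graph[n].items():
            if g + cost < best_g.get(p, float("inf")):
                best_g[p] = g + cost
                heapq.heappush(queue, (g + cost + h[p], g + cost, p))
    return expansions


# Hypothetical graph: the best path to B is S -> A -> B with cost 2.
graph = {
    "S": {"A": 1, "B": 3},
    "A": {"B": 1},
    "B": {"G": 5},
    "G": {},
}

# Exact costs to the goal: a consistent heuristic.
consistent_h = {"S": 7, "A": 6, "B": 5, "G": 0}
# Admissible but inconsistent: h(A) = 5 > c(A, B) + h(B) = 1 + 0.
inconsistent_h = {"S": 0, "A": 5, "B": 0, "G": 0}

print(astar_expansions(graph, consistent_h, "S"))    # each node appears once
print(astar_expansions(graph, inconsistent_h, "S"))  # B appears twice
```

With the inconsistent heuristic, B is first expanded through the costlier direct edge and must be expanded again once the cheaper path through A is discovered.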

If the given heuristic $h$ is admissible but not consistent, one can artificially force the f-values along a path to be monotonically non-decreasing by using

$$h'(P) \gets \max\bigl(h(P),\, h'(N) - c(N,P)\bigr)$$

as the heuristic value for $P$ instead of $h(P)$, where $N$ is the node immediately preceding $P$ on the path and $h'(\text{start}) = h(\text{start})$. This idea is due to László Mérō[5] and is now known as pathmax. Contrary to common belief, pathmax does not turn an admissible heuristic into a consistent heuristic. For example, if A* uses pathmax and a heuristic that is admissible but not consistent, it is not guaranteed to have an optimal path to a node when it is first expanded.[6]
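The effect of pathmax on a single path can be sketched as follows, assuming the commonly stated rule h'(P) = max(h(P), h'(N) − c(N,P)) with h'(start) = h(start). The function name `pathmax_values`, the example path, and the heuristic values are hypothetical.

```python
def pathmax_values(path, costs, h):
    """Apply the pathmax rule along one path.

    path:  list of nodes from the start node onwards
    costs: costs[i] is the step cost c(path[i], path[i + 1])
    h:     dict mapping node -> original heuristic estimate
    """
    adjusted = [h[path[0]]]  # h'(start) = h(start)
    for i in range(1, len(path)):
        adjusted.append(max(h[path[i]], adjusted[-1] - costs[i - 1]))
    return adjusted


def f_values(costs, h_values):
    """f = g + h along the path, with g accumulated from the step costs."""
    g, result = 0, [h_values[0]]
    for cost, hv in zip(costs, h_values[1:]):
        g += cost
        result.append(g + hv)
    return result


# Hypothetical path with unit costs and an admissible, inconsistent heuristic.
path = ["N3", "N2", "N1", "N0"]
costs = [1, 1, 1]
h = {"N3": 1, "N2": 2, "N1": 0, "N0": 0}

original = [h[n] for n in path]
adjusted = pathmax_values(path, costs, h)

print(f_values(costs, original))  # f dips after N2
print(f_values(costs, adjusted))  # f is non-decreasing along this path
```

The adjusted values depend on the path along which they were computed, which is one way to see why pathmax does not by itself yield a consistent heuristic on the whole graph.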

Relation to Local Admissibility

Rewriting the consistency condition as h(N)−h(P) ≤ c(N,P) establishes a connection to local admissibility: the drop in the heuristic estimate between a node and its successor never exceeds the actual step cost. This plays the same role for individual steps that admissibility plays for the whole path to the goal, so that decisions that look optimal locally remain compatible with a globally optimal solution.

References

- "Designing & Understanding Heuristics" (PDF).

- Pearl, Judea (1984). Heuristics: Intelligent Search Strategies for Computer Problem Solving. Addison-Wesley. ISBN 0-201-05594-5.

- Edelkamp, Stefan; Schrödl, Stefan (2012). Heuristic Search: Theory and Applications. Morgan Kaufmann. ISBN 978-0-12-372512-7.

- Russell, Stuart; Norvig, Peter (Dec 1, 2009). Artificial Intelligence: A Modern Approach (3 ed.). New Jersey: Pearson Education. pp. 95–97. ISBN 0136042597. Retrieved 28 January 2025.

- Mérō, László (1984). "A Heuristic Search Algorithm with Modifiable Estimate". Artificial Intelligence. 23: 13–27. doi:10.1016/0004-3702(84)90003-1.

- Holte, Robert (2005). "Common Misconceptions Concerning Heuristic Search". Proceedings of the Third Annual Symposium on Combinatorial Search (SoCS). Archived from the original on 2022-08-01. Retrieved 2019-07-10.